Programmer Helper

This is a basic site for programming help. Whenever I learn something new about programming, I will post it here; I guess it will be useful to you too. I'm going to use this as a repository for valuable programming tips that I would otherwise struggle to find later, either on my PC or on the Net. Happy learning! :o) Check out my old blog: http://edwardanil.blogspot.com


Tuesday, September 17, 2019

Monday, January 12, 2015

25 Math Concepts You Absolutely Need to Know

- The area of a circle is A = πr², where r is the radius of the circle.

- The circumference of a circle is C = 2πr, where r is the radius of the circle. The circumference can also be expressed as C = πd, where d is the diameter, because the diameter is always twice the radius.

- The area of a rectangle is A = lw, where l is the length of the rectangle and w is the width of the rectangle.

- The area of a triangle is A = ½bh, where b is the base of the triangle and h is the height of the triangle.

- The volume of a rectangular prism is V = lwh, where l is the length of the rectangular prism, w is the width of the rectangular prism, and h is the height of the rectangular prism.

- The volume of a cylinder is V = πr²h, where r is the radius of one of the bases of the cylinder and h is the height of the cylinder.

- The perimeter is the total distance around the boundary of a two-dimensional shape.

- The Pythagorean Theorem applies only to right triangles and states that c² = a² + b², where c is the hypotenuse (the side opposite the right angle) and a and b are the other two sides (the legs).

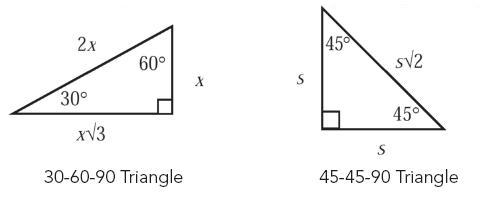

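- Special right triangles have fixed angle measures and side ratios: in a 30°-60°-90° triangle the sides are in the ratio x : x√3 : 2x (the side opposite 30° is half the hypotenuse), and in a 45°-45°-90° triangle the sides are in the ratio x : x : x√2.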

- In an equilateral triangle, all three sides have the same length, and each of the angles equals 60°.

- In an isosceles triangle, two sides have the same length, and the angles opposite those sides are congruent.

- The complete arc of a circle has 360°.

- A straight line has 180°.

- A prime number is a whole number greater than 1 that can only be divided evenly by itself and 1.

- Squaring a negative number yields a positive result.

- To change any fraction to a decimal, divide the numerator by the denominator.

- If two numbers have one or more divisors in common, those are the common factors of the numbers.

- To calculate the mean, or average, of a list of values, divide the sum of the values by the number of values in the list.

- The median is the middle value of a list of numbers sorted in either ascending or descending order. If the list has an even number of values, the median is the mean of the two middle values.

- The mode is the value that appears the greatest number of times in a list.

- A ratio expresses a mathematical comparison between two quantities. (1/4 or 1:4)

- A proportion is an equation stating that two ratios are equal. (1/4 = x/8 or 1:4 = x:8)

- When multiplying exponential expressions with the same base, add the exponents.

- When dividing exponential expressions with the same base, subtract the exponents.

- When raising one power to another power, multiply the exponents.
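Most of these concepts map directly onto a few lines of Python. This is only an illustrative sketch: the function names are my own, not from any library, and only the standard `math` and `statistics` modules are used.

```python
import math
import statistics

# Geometry formulas from the list (r = radius, l = length, w = width,
# b = base, h = height). Function names are illustrative only.
def circle_area(r: float) -> float:
    return math.pi * r ** 2          # A = πr²

def circle_circumference(r: float) -> float:
    return 2 * math.pi * r           # C = 2πr = πd

def rectangle_area(l: float, w: float) -> float:
    return l * w                     # A = lw

def triangle_area(b: float, h: float) -> float:
    return 0.5 * b * h               # A = ½bh

def prism_volume(l: float, w: float, h: float) -> float:
    return l * w * h                 # V = lwh

def cylinder_volume(r: float, h: float) -> float:
    return math.pi * r ** 2 * h      # V = πr²h

def is_right_triangle(a: float, b: float, c: float) -> bool:
    # Pythagorean Theorem: true when c (the hypotenuse) satisfies c² = a² + b².
    return math.isclose(a ** 2 + b ** 2, c ** 2)

def is_prime(n: int) -> bool:
    # Prime: a whole number greater than 1 divisible only by 1 and itself.
    if n < 2:
        return False
    return all(n % d != 0 for d in range(2, math.isqrt(n) + 1))

values = [3, 7, 7, 2, 9, 4]
print(statistics.mean(values))    # sum of values / number of values
print(statistics.median(values))  # 5.5: mean of the two middle values (4 and 7)
print(statistics.mode(values))    # 7: the most frequent value

# Exponent rules with the same base a: multiply -> add exponents,
# divide -> subtract exponents, power of a power -> multiply exponents.
a, m, n = 2, 5, 3
assert a ** m * a ** n == a ** (m + n)
assert a ** m / a ** n == a ** (m - n)
assert (a ** m) ** n == a ** (m * n)
```

`is_prime` only tests divisors up to the square root of n, because any larger divisor would pair with a smaller one that has already been checked.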

Tuesday, January 06, 2015

How to make your Wordpress site secure

HOSTING

• Look for hosts with experience hosting WordPress sites.

• Look for hosts with solid support.

• Look for hosts that are transparent: who communicate quickly and post issues online.

• Make sure your host does regular backups that you can access.

• Call your potential host to find out which versions of Apache web server, MySQL, and PHP they’re running. Check the version release dates with a Google search.

• Ask your host for written documents containing their server data backup, failover, and update or maintenance policy. If they don’t have them, find another host.

• Recommended hosts: WP Engine and ZippyKid

HARDENING AND PROTECTING WORDPRESS

• Keep WordPress, themes, and plugins up to date. Always. Period.

• If you’re unsure about how to update WordPress, themes, and plugins, hire someone to do it for you.

• Back up your site before you update WordPress, themes, and/or plugins.

• Disable unused user accounts.

• Never use “Admin” as your username. Ever.

• Grant users the minimum privilege they need to do their jobs.

• Require strong passwords.

• Use 1Password or KeePass to create strong passwords.

• Use a different, strong password for every site login.

• Lock down the WordPress admin dashboard (/wp-admin) using an .htaccess file.

• Use SFTP to access your web host.

• Enable SSL on your WP install.

• Change your passwords once a month. Set a reminder in your calendar if you have to.

• Do backups. Recommended: BackupBuddy, VaultPress

• Set file permissions to 644 for files and 755 for folders.

• Ensure that wp-config.php is not world-readable, especially in a shared hosting environment.

• Consider adding HTTP authentication to your /wp-admin/ area.

• Read Sucuri.net’s blog.

• Read Google’s security blog.
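If you have shell access to your server, a short Python script can check the 644/755 rule and the wp-config.php rule for you. This is a hedged sketch, not an official WordPress tool: it assumes a POSIX filesystem, only reports problems (it never runs chmod), and treats any wp-config.php mode without the "other" read bit (for example 640 or 600) as acceptable. Hosts differ, so check your host's recommendations before changing anything.

```python
import os
import stat
import sys
from typing import List

def audit_permissions(root: str) -> List[str]:
    """Report (without changing anything) directories that are not 755,
    files that are not 644, and a world-readable wp-config.php under root."""
    problems = []
    for dirpath, _dirnames, filenames in os.walk(root):
        dir_mode = stat.S_IMODE(os.stat(dirpath).st_mode)
        if dir_mode != 0o755:
            problems.append(f"dir  {oct(dir_mode)} {dirpath}")
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.islink(path):
                continue  # skip symlinks; this sketch only audits regular files
            mode = stat.S_IMODE(os.stat(path).st_mode)
            if name == "wp-config.php":
                # Holds database credentials: the "other" read bit must be off
                # (e.g. 640 or 600), instead of the usual 644.
                if mode & stat.S_IROTH:
                    problems.append(f"file {oct(mode)} {path} (world-readable)")
            elif mode != 0o644:
                problems.append(f"file {oct(mode)} {path}")
    return problems

if __name__ == "__main__" and len(sys.argv) > 1:
    # Example: python audit_wp.py /var/www/html  (script name and path are hypothetical)
    for problem in audit_permissions(sys.argv[1]):
        print(problem)
```

Reporting instead of fixing is deliberate: some hosts or plugins legitimately need different modes on specific folders (uploads, cache), so it is safer to review the list before changing anything.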

CHOOSING THE RIGHT PLUGIN

• Look for properly sanitized data and MySQL statements, uniquely namespaced functions and classes, and use of the Settings API for any plugin settings or options.

• Look for plugins that check WordPress nonces before processing form submissions and actions, rather than trusting browser cookies alone.

• Check out how quickly the developer responds to support requests.

• Check out forum threads to see how well the plugin is supported.

• Is the developer a known and respected member of the community?

• Look for a plugin that does one or two tasks really well.

• If two plugins do similar things, choose the one with the higher download count.

YOU’VE BEEN HACKED. NOW WHAT?

• Let your web host know what happened.

• Make a full backup of the infected site. It’s helpful for reviewing what happened and in case you mess up something during the repair.

• Change all of your passwords and the authentication keys and salts in wp-config.php.

• Remove any old themes, plugins, and unused code from your server.

• Update all code on your server. Re-install WordPress so all of the WordPress files are overwritten with fresh copies.

• Reinstall themes or plugins with fresh copies to make sure no malicious code was inserted.

• Check that the file permissions on your files are correct, especially wp-config.php and uploads.

• Remove the rogue code, and make sure you check all sites on your hosting account. Tools such as VaultPress can help scan for and clean the infection, and Exploit Scanner also scans for certain exploits.

• If you don’t have the ability to fix the infected files, the best thing to do is restore from a recent clean backup.

• Check your server access logs. Search for any bad file names that you found on your server, patterns passed as query strings, or dates/times that may clue you in to when the attack happened.
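Searching access logs by hand gets tedious, so here is a minimal Python sketch of that search. It assumes the common Apache/Nginx "combined" log format; the file name `shell.php`, the patterns `base64_decode` and `cmd=`, and the sample log lines are hypothetical examples, so substitute the file names and query-string patterns you actually found on your server.

```python
import re
from typing import Iterable, Iterator, List, Tuple

# Assumes Apache/Nginx "combined" log format, for example:
# 203.0.113.5 - - [06/Jan/2015:10:12:01 +0000] "GET /index.php HTTP/1.1" 200 512 "-" "Mozilla/5.0"
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3})'
)

def find_suspicious(
    lines: Iterable[str], bad_names: List[str], query_patterns: List[str]
) -> Iterator[Tuple[str, str, str]]:
    """Yield (ip, time, path) for each request whose path contains one of the
    bad file names, or whose query string matches one of the regexes."""
    compiled = [re.compile(p, re.IGNORECASE) for p in query_patterns]
    for line in lines:
        m = LOG_LINE.match(line)
        if not m:
            continue  # skip lines in other formats
        path = m.group("path")
        query = path.split("?", 1)[1] if "?" in path else ""
        if any(name in path for name in bad_names) or any(p.search(query) for p in compiled):
            yield m.group("ip"), m.group("time"), path

if __name__ == "__main__":
    # Hypothetical sample lines; in practice pass an open log file instead.
    sample = [
        '203.0.113.5 - - [06/Jan/2015:10:12:01 +0000] "GET /wp-content/uploads/shell.php?cmd=id HTTP/1.1" 200 512 "-" "curl/7.35"',
        '198.51.100.7 - - [06/Jan/2015:10:13:44 +0000] "GET /index.php HTTP/1.1" 200 1024 "-" "Mozilla/5.0"',
    ]
    for hit in find_suspicious(sample, ["shell.php"], [r"base64_decode", r"cmd="]):
        print(hit)
```

Because the function accepts any iterable of lines, you can pass an open log file directly; its location depends on your host. The timestamps it returns help narrow down when the attack started.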

Please also visit my other blogs: http://edwardanil.blogspot.com for information and http://netsell.blogspot.com for internet marketing. Thanks!

Sunday, January 15, 2012

Tips on how to avoid or resolve deadlocking on your SQL Server

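- Detect deadlocks with SQL Profiler (the Deadlock graph event).

- Detect deadlocks with the system_health Extended Events session (what you can see depends on what is still held in its ring buffer).

- Detect deadlocks with a server-side trace.

- Log deadlock details to the SQL Server error log by enabling trace flag 1204 or 1222 globally, e.g. DBCC TRACEON (1204, -1).

- Log deadlock information automatically with event notifications (Service Broker) or Extended Events.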

- Ensure the database design is properly normalized.

- Have the application access server objects in the same order each time.

- During transactions, don’t allow any user input. Collect it before the transaction begins.

- Avoid cursors.

- Keep transactions as short as possible. One way to do this is to reduce the number of round trips between your application and SQL Server by using stored procedures or keeping each transaction within a single batch. Another is to avoid performing the same reads over and over again: if your application needs the same data more than once, cache it in a variable or an array and re-read it from there, not from SQL Server.

- Reduce lock time. Try to develop your application so that it grabs locks at the latest possible time, and then releases them at the very earliest time.

- If appropriate, reduce lock escalation by using the ROWLOCK or PAGLOCK hints.

- Consider using the NOLOCK hint to avoid taking shared locks on reads when the data is rarely modified; be aware that it allows dirty (uncommitted) reads.

- If appropriate, use the lowest isolation level possible for the user connection running the transaction.

- Consider using bound connections.

- Adding missing indexes to support faster queries

- Dropping unnecessary indexes, which can slow down operations such as INSERTs

- Redesigning indexes to be "thinner", for example, removing columns from composite indexes or making table columns "thinner" (see below)

- Adding index hints to queries where appropriate (I mostly avoid this, but it has its uses)

- Redesigning tables with "thinner" columns like smalldatetime vs. datetime or smallint vs. int

- Modifying the stored procedures to access tables in a similar pattern

- Keeping transactions as short and quick as possible: "mean & lean"

- Removing unnecessary extra activity from the transactions like triggers

- Removing JOINs to Linked Server (remote) tables if possible

- Implementing regular index maintenance; a weekend schedule usually suffices. Consider FILLFACTOR = 80 for frequently modified tables, but evaluate this carefully first.
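Python cannot show SQL Server's lock manager, but the "access objects in the same order each time" rule can be sketched with threads. In this illustration the two `threading.Lock` objects are hypothetical stand-ins for locks on two tables named Orders and Customers; sorting the resources before locking them means no two callers can end up waiting on each other in a cycle. In T-SQL the equivalent would be writing every stored procedure to touch shared tables in the same order.

```python
import threading
from contextlib import ExitStack
from typing import Dict

# Hypothetical stand-ins: one lock per "table", like the locks SQL Server takes.
locks: Dict[str, threading.Lock] = {"Orders": threading.Lock(), "Customers": threading.Lock()}
data: Dict[str, int] = {"Orders": 0, "Customers": 0}

def update_both(first: str, second: str, delta: int) -> None:
    # Always lock resources in one fixed (sorted) order, whatever order the
    # caller names them in. Callers asking for (Orders, Customers) and
    # (Customers, Orders) therefore cannot each hold one lock while waiting
    # for the other, which is the classic deadlock.
    with ExitStack() as stack:
        for name in sorted({first, second}):
            stack.enter_context(locks[name])
        # Keep the "transaction" short: only the updates run while locked.
        data[first] += delta
        data[second] += delta

threads = [
    threading.Thread(target=lambda: [update_both("Orders", "Customers", 1) for _ in range(10_000)]),
    threading.Thread(target=lambda: [update_both("Customers", "Orders", 1) for _ in range(10_000)]),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(data)  # both counters reach 20000 and the program never hangs
```

If `update_both` locked `first` and then `second` without sorting, the two threads could each grab one lock and wait forever for the other; SQL Server breaks such a cycle by choosing a deadlock victim, while plain Python threads would simply hang.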

Tuesday, April 26, 2011

Cloud development: 9 gotchas to know before you jump in

Application development and testing in the cloud are gaining popularity, as more businesses launch public and private cloud computing initiatives. Cloud development typically includes integrated development environments, application lifecycle management components (such as test and quality management, source code and configuration management, continuous delivery tools), and application security testing components.

Although technology executives and developers with experience in cloud-based development say there are clear benefits to developing in these environments, such as cost savings and faster time to market, they also caution that there are challenges and surprises to look out for.


Despite the learning curve, cloud development is appealing

Despite the potential challenges, for many organizations developing applications in the cloud, rather than sticking with traditional methods, makes sense for the same reasons that cloud computing in general makes sense: elasticity of resources and cost, and reduced operational complexity, both of which lead to shorter completion times.

This article, "Cloud development: 9 gotchas to know before you jump in," was originally published at InfoWorld.com.